Introduction

Claude Chrome Extension incident highlighted that how zero-click GenAI vulnerabilities can turn trusted AI systems into attack pathways

The rapid adoption of Generative AI (GenAI) tools has introduced a new paradigm in human-computer interaction—where systems not only process data but also interpret intent and take autonomous actions. While this shift unlocks powerful capabilities, it also introduces fundamentally new attack surfaces.

One of the most important real-world demonstrations of this risk emerged in December 2025 and was publicly disclosed in March 2026, involving the Claude Chrome Extension developed by Anthropic. The Claude Chrome extension (beta, released December 2025) introduced agent-like browser capabilities. Shortly after, researchers identified a critical zero-click vulnerability.

This incident is now widely regarded as a landmark case in GenAI security, highlighting how traditional vulnerabilities—when combined with AI behavior—can evolve into zero-click, autonomous exploitation mechanisms.

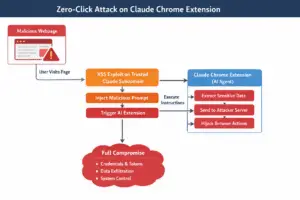

Technical flow of the Possible Exploitation of the Vulnerability

The zero-click GenAI vulnerabilities in this case (dubbed “ShadowPrompt”) allowed attackers to:

- Compromise users without any interaction

- Inject malicious prompts via trusted domains

- Trigger the AI assistant to execute attacker-controlled instructions

Simply visiting a malicious webpage was sufficient. “No clicks, no permission prompts, just visit a page.” Using this vulnerability, attackers could potentially extract API keys, credentials, and private conversations; trigger actions on behalf of the user; or even fully hijack browser-level workflows all without user consent or knowledge.

A critical aspect of this attack model is that it does not require direct interaction from the user. The mere act of accessing or processing the malicious content is sufficient to trigger the attack. This approach makes detection and prevention significantly more challenging compared to traditional attack methods.

The attack was possible because of multiple reasons, including the over-trusted domains since the extension trusted all *.claude.ai subdomains and a vulnerable subdomain contained a Cross-Site Scripting (XSS) flaw; prompt injection via web content that is malicious scripts embedded hidden prompts into webpages and the extension interpreted them as legitimate user instructions; and the AI agent could do autonomous execution, like read browser content, extract sensitive data, or send outputs externally.

Why This Vulnerability Matters—Broader Implications

The vulnerability was not just a browser bug—it exposed a systemic weakness in GenAI architecture.

Key Insight: In GenAI systems, data can become instructions.

The zero-click vulnerability identified in the Claude Chrome extension is not merely an isolated technical flaw; rather, it represents a significant shift in the cybersecurity landscape associated with Generative AI (GenAI) systems. This incident highlights how the integration of AI into everyday tools introduces new categories of risks that traditional security models are not fully equipped to handle.

One of the most important implications of this vulnerability is the emergence of what can be described as “zero-click AI attacks.” Unlike conventional cyberattacks that rely on phishing, malware installation, or some form of user interaction, this new class of attacks requires none of these elements. Instead, the attack is executed entirely through AI-driven interpretation and execution of malicious instructions embedded within seemingly benign content. This fundamentally challenges long-standing assumptions about user awareness and participation in the attack chain.

Another critical implication is the role of AI systems as attack proxies. Modern GenAI tools increasingly function as data aggregators, decision-makers, and action executors. When such systems are compromised, they do not merely expose data; they can actively perform operations on behalf of the user. In effect, the attacker gains access to a high-privilege agent capable of interacting with multiple systems, thereby significantly amplifying the potential impact of the attack.

The vulnerability also underscores the expansion of the attack surface introduced by GenAI tools. These systems are often deeply integrated with browsers, email platforms, SaaS applications, and even local environments. As a result, a single point of compromise—such as a malicious webpage—can propagate across multiple interconnected systems. This creates a cascading effect, where the initial breach leads to broader system-level exposure and exploitation.

Furthermore, the incident illustrates what can be termed as a “trust amplification risk.” In traditional scenarios, a vulnerability such as Cross-Site Scripting (XSS) might result in limited consequences, such as session theft. However, when combined with AI capabilities, the same vulnerability can lead to automated data exfiltration, execution of complex tasks, and even autonomous decision-making by the compromised system. This demonstrates that AI does not merely introduce new vulnerabilities; it amplifies the impact of existing ones.

Why Other GenAI Tools Are Also Vulnerable

The issues highlighted by this vulnerability are not confined to a single platform; rather, they are inherent to the architectural design of many modern GenAI systems. Any such system becomes potentially vulnerable when it combines three key capabilities.

First, GenAI tools often possess the ability to ingest external content, including webpages, emails, and documents. Attackers can manipulate these inherently untrusted sources to include malicious instructions. Second, these systems frequently have action-oriented capabilities, enabling them to send emails, invoke APIs, or execute workflows on behalf of the user. Third, and most critically, GenAI systems tend to implicitly trust natural language input, treating it not merely as data but as actionable instructions.

This combination creates a powerful but risky dynamic. It is important to note that this architectural pattern is common across widely used platforms such as ChatGPT, Microsoft Copilot, and Google Gemini. Therefore, the risk is systemic rather than platform-specific.

Key Takeaway

In conclusion, the Claude Chrome Extention vulnerability demonstrates that any GenAI system capable of ingesting untrusted content and performing actions based on that content inherently introduces a zero-click attack surface. This marks a fundamental shift in cybersecurity, necessitating organizations to reconsider their implementation of trust, input validation, and execution controls in AI-driven environments.

Recommendations for Securing GenAI Systems

The emergence of vulnerabilities such as the one observed in the Claude Chrome extension highlights the urgent need for a new security approach tailored to generative AI systems.

A fundamental recommendation is to treat all external content as untrusted by default. GenAI systems frequently process data from sources such as webpages, emails, and documents, all of which can be manipulated by adversaries. Therefore, organizations must implement strict input validation and sanitization mechanisms.

Another critical control is the separation of data processing from action execution. GenAI systems should be architected in a way that distinguishes clearly between “reading” and “doing.” For example, an AI assistant may analyze or summarize content, but any action—such as sending emails, accessing sensitive data, or invoking APIs—should require additional validation layers, policy enforcement, or explicit user confirmation. This separation reduces the risk of automated exploitation through indirect prompt injection.

Organizations should also introduce intent verification and policy enforcement mechanisms. Before executing any action, the system should evaluate whether the requested operation aligns with predefined security policies and user intent. This can be achieved through rule-based engines, context-aware authorization checks, or human-in-the-loop controls for high-risk operations. Such measures help ensure that AI-driven actions remain within acceptable boundaries.

The principle of least privilege must be strictly enforced for AI agents. GenAI tools should only be granted the minimum level of access required to perform their intended functions. For instance, limiting access to specific APIs, restricting browser permissions, and isolating sensitive data sources can significantly reduce the potential impact of a compromise.

In addition, organizations should implement continuous monitoring and auditing of AI behavior. This includes logging AI interactions, tracking executed actions, and analyzing anomalies that may indicate malicious activity. Such monitoring capabilities function similarly to endpoint detection and response (EDR) systems but are tailored to AI-driven workflows. Early detection of unusual patterns can help prevent or contain attacks before they escalate.

https://thehackernews.com/2026/03/claude-extension-flaw-enabled-zero.html

https://thecyberskills.com/category/threat-insights/